2017-18

September 20, 2017, 6-7:30 PM in 234 Moses Hall

Douglas Marshall (Carleton College)

Impurity of Methods: Finite Geometry in the Early Twentieth Century

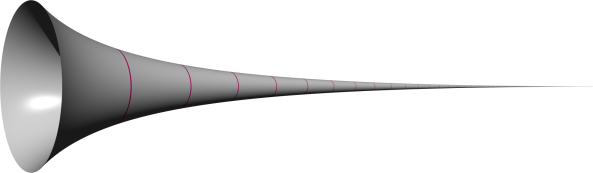

My talk aims to assess the reasonableness of various demands for purity of methods in mathematics by means of a historical case study of finite geometry in the early twentieth century. Work done in the foundations of algebra from 1900 to 1910 paved the way for corresponding advances in finite geometry. In particular, a geometric theorem on finite projective planes (Veblen and Bussey, 1906) was proved only with the help of an algebraic theorem on finite division rings (Wedderburn, 1905; Dickson, 1905). In later years, several attempts were made to find a “pure” or “purely geometric” proof of Veblen and Bussey’s theorem, none of them entirely successful (Segre, 1958; Tecklenburg, 1987). Given that it has already been proved, what exactly would be accomplished by the discovery of a “pure” or “purely geometric” proof of Veblen and Bussey’s theorem? What considerations favor or disfavor the devotion of research effort to a purely geometric development of finite geometry?

October 04, 2017, 6-7:30 PM in 234 Moses Hall

Barry Loewer (Rutgers University)

Perfectly Natural Properties and the Best System Accounts of Laws

David Lewis’ Best System account of fundamental laws requires there to be a preferred language relative to which the Best System (BS) is formulated. He proposes that the basic terms in this language (in addition to mathematical and logical terms) refer to what he calls “perfectly natural quantities.” Bas van Fraassen objected that “perfectly natural properties” involve a metaphysical posit that disconnects the Best System account from scientific practice and leads to an unwelcome skepticism about laws. In my talk I develop an alternative version of the BS account that avoids this objection and, interestingly, is neutral between Humean and non-Humean accounts of laws.

October 25, 2017, 6-7:30 PM in 234 Moses Hall

Robert May (UC Davis)

Sense

What is sense? Frege’s answer is this: Sense is what makes a reference thinkable such that in virtue of thinking this way an agent has grounds for making a judgement. In this talk, I explore this conception, which places sense at the crux of Frege’s account of judgement. The central claim is that sense is a composite notion, split between what makes a reference thinkable (mode of determination) and how we think of references (mode of presentation). These are related via grasp: an agent who grasps a mode of determination of a reference has a mode of presentation of that reference, and accordingly has grounds for making a judgement. This is crucial to understanding how Frege responded to the threat to logicism posed by the identity puzzle, viz. that a = b requires a special act of recognition in judgement. But it does, perhaps surprisingly, leave open the analysis of a = a.

March 14, 2018, 6-7:30 PM in 234 Moses Hall

Alan Hájek (Australian National University)

Most Counterfactuals Are Still False

I have long argued for a kind of ‘counterfactual scepticism’: most counterfactuals are false. I maintain that the indeterminism and indeterminacy associated with most counterfactuals entail their falsehood. For example, I claim that these counterfactuals are both false:

(Indeterminism) If the chancy coin were tossed, it would land heads.

(Indeterminacy) If I had a son, he would have an even number of hairs on his head at his birth.

And I argue that most counterfactuals are relevantly similar to one or both of these, as far as their truth-values go. I also have arguments from the incompatibility of ‘would’ and ‘might not’ counterfactuals, and from so-called ‘reverse-Sobel sequences’.

However, counterfactual reasoning seems to play an important role in science, and ordinary speakers judge many counterfactuals that they utter to be true. A number of philosophers have defended such judgments against counterfactual scepticism. David Lewis and others appeal to ‘quasi-miracles’; Robbie Williams to ‘typicality’; John Hawthorne and H. Orri Stefánsson to ‘counterfacts’, primitive counterfactual facts; Moritz Schulz to an arbitrary-selection semantics; Jonathan Bennett and Hannes Leitgeb to high conditional probabilities; Karen Lewis to contextually sensitive ‘relevance’.

I argue against each of these proposals. A recurring theme is that they fail to respect certain valid inference patterns. I conclude: most counterfactuals are still false.

April 11, 2018, 6-7:30 PM in 234 Moses Hall

Hanti Lin (UC Davis)

Modes of Convergence to the Truth – Steps toward a Better Epistemology of Induction

Those who engage in normative or evaluative studies of induction, such as formal epistemologists, statisticians, and computer scientists, have provided many positive results for justifying (to a certain extent) various kinds of inductive inferences. But they all have said little about a very familiar kind of induction. I call it full enumerative induction, of which an instance is this: “We’ve seen this many ravens and they all are black, so all ravens are black”—without a stronger premise such as IID or a weaker conclusion such as “all the ravens observed in the future will be black”. I explain why those theorists of induction all say little about full enumerative induction. To remedy this, I propose that Bayesians be learning-theoretic Bayesians and learning-theorists be truly learning-theoretic—in three steps. (i) Understand certain modes of convergence to the truth as epistemic ideals for an inquirer to achieve where possible. (ii) Require the norm that an inquirer ought to achieve the highest achievable epistemic ideal. (iii) See whether full enumerative induction can be justified as—that is, proved to be—a necessary means for achieving the highest epistemic ideal achievable for tackling the problem of whether all ravens are black. The answer is positive, thanks to a new theorem, whose Bayesian version is proved as well. The technical breakthrough consists in introducing a mode of convergence slightly weaker than Gold’s (1965) and Putnam’s (1965) identification in the limit; I call it almost everywhere convergence to the truth, where the conception of “almost everywhere” is borrowed from geometry and topology. The talk will not presuppose knowledge of topology or learning theory.